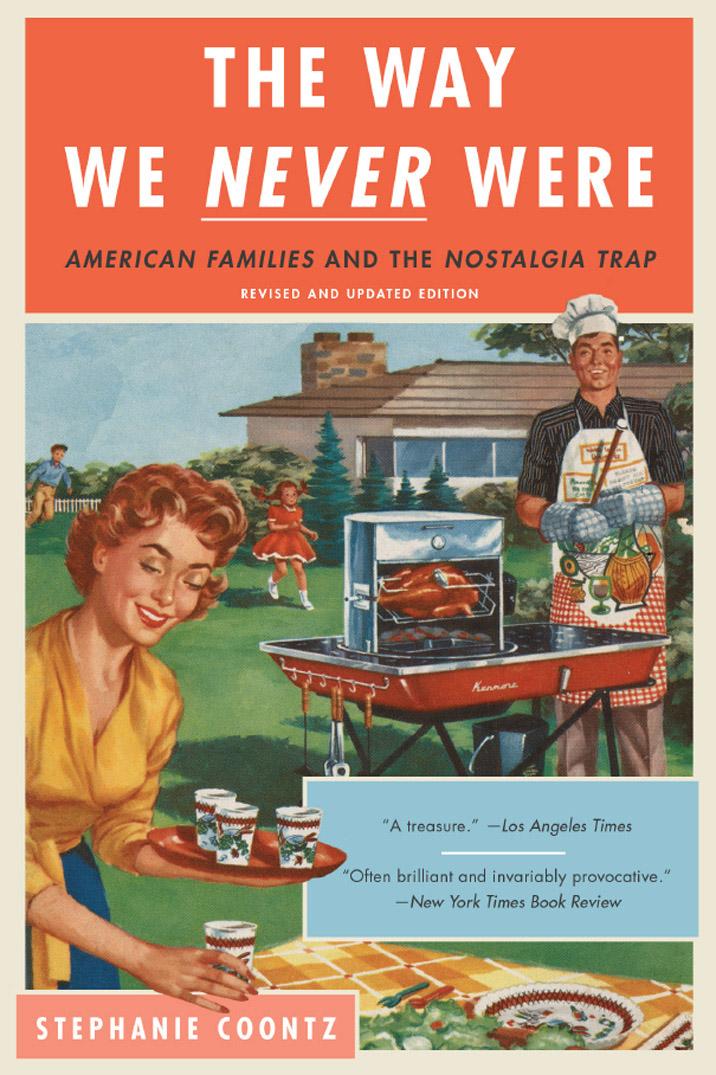

Stephanie Coontz

The Way We Never Were

American Families and the Nostalgia Trap

Praise for The Way We Never Were

Introduction to the 2016 Edition

1. The Way We Wish We Were: Defining the Family Crisis

The Elusive Traditional Family

The Complexities of Assessing Family Trends

Wild Claims and Phony Forecasts

Negotiating Through the Extremes

2. “Leave It to Beaver” and “Ozzie and Harriet”: American Families in the 1950s

The Novelty of the 1950s Family

A Complex Reality: 1950s Poverty, Diversity, and Social Change

More Complexities: Repression, Anxiety, Unhappiness, and Conflict

Contradictions of the 1950s Family Boom

Teen Pregnancy and the 1950s Family

The Problem of Women in Traditional Families

3. “My Mother Was a Saint”: Individualism, Gender Myths, and the Problem of Love

Social Dependence and Interdependence in Other Cultures

The Dark Side of Interdependence: Dependency and Subjugation

Freedom Struggles and the Rise of Individual Contract Rights

The Dark Side of Independence: Freedom and Fragmentation

Rational Egoism for Men, Irrational Altruism for Women

The Growing Importance of Love

The Family, Masculine and Feminine Identity, and the Contradictions of Love

4. We Always Stood on Our Own Two Feet: Self-Reliance and the American Family

A Tradition of Dependence on Others

Self-Reliance and the American West

Self-Reliance and the Suburban Family

The Myth of Self-Reliant Families: Public Welfare Policies

Subsidizing Family Housing: Hidden Inequities, Unintended Consequences, and Cost Overruns

Debating Family Policy: Why It’s So Hard

5. Strong Families, the Foundation of a Virtuous Society: Family Values and Civic Responsibility

Private Values Versus Public Values

Traditional Restraints on American Individualism

The First Gilded Age: Emergence of a New Conservatism

The New Focus on Family Morality

The Seamy Side of Family Moralism

Family Idealization and the Collapse of Public Life

Private Life and Public Scandal: The “New Moralism” Then and Now

The Fragility of the Private Family

6. A Man’s Home Is His Castle: The Family and Outside Intervention

Privacy and Autonomy in Traditional American Families

The Formalization of Outside Intervention

Family Privacy Before the Civil War

Family Privacy and the Laissez-Faire State

Progressive Reform: Family Preservation and State Expansion in the Early Twentieth Century

The Irony of State Intervention

Recent State Policies: Does the Government Support Monarchy or Democracy in Modern Families?

Family Autonomy, Privacy, and the State

7. Bra-Burners and Family Bashers: Feminism, Working Women, Consumerism, and the Family

The Curious History of Mother’s Day

1900 to World War II: Steady Growth in Married Women’s Employment

The 1950s—a Turning Point in Women’s Work

Women’s Work in the 1960s and 1970s

Working Women and the Revival of a Women’s Rights Movement

Consumerism and Materialism in American Life

The Origins of Consumerism, 1900 to the 1960s

Consumerism, the Mass Media, and the Family Since the 1960s

The Impact of Consumerism on Personal Life

Consumerism, the Work Ethic, and the Family

The Changing Role of Marriage and Childrearing in the Life Course

Changes in the Roles and Experiences of Youth

The Technological Revolution in Reproduction: Separation of Sex from Procreation

The Changing Role of Sexuality in Society

The First Sexual Revolution and Its Impact

Assessing the Impact of the Second Sexual Revolution

Teenage Mothers and the Sexual Revolution

Finding Our Way Through the New Reproductive Terrain

Putting Our Family Maps in Perspective

9. Toxic Parents, Supermoms, and Absent Fathers: Putting Parenting in Perspective

What Is a Normal Family and Childhood?

Maternal Employment and Childrearing

The Myth of Parental Omnipotence

When the Risks Become Overwhelming

African American Families in U.S. History

The Strengths of Black Families

The Postwar Experience of African American Families

Black Families and the “Underclass”

Black Family “Pathology” Revisited

Blaming the Family: A Gross Oversimplification

The Deteriorating Position of Young Families

Modern Families and the Collapse of the “American Dream”

Economic Polarization, Personal Readjustment, and the Unraveling of the Social Safety Net

The Values Issue in Modern Families: Erosion of the American Conscience

Cynicism and Self-Centeredness: Not Just a Family Affair

Epilogue to the 2016 Edition: For Better AND Worse: Family Trends in the Twenty-first Century

[Front Matter]

Praise for The Way We Never Were

“More than twenty years ago, in The Way We Never Were, Stephanie Coontz cut through all the bizarre stereotypes we carry with us about marriage and the family in America. Now, in a brilliant revision, she jolts us once again by bringing us up to date on when people marry, how income inequality affects family life, and why we have made so much progress on issues of gay marriage and so little on issues of poverty and women’s reproductive rights. A terrific read with amazing new information!”

—WILLIAM H. CHAFE, Alice Mary Baldwin Professor of History, Duke University, and former president, Organization of American Historians

“Coontz presents fascinating facts and figures that explode the cherished myths about self-sufficient, happy, moral families.”

—Newsday

“Historically rich, and loaded with anecdotal evidence, The Way We Never Were effectively demolishes the normal, traditional nuclear family as neither normal nor traditional, and not even nuclear.”

—Nation

“A wonderfully perceptive, myth-debunking report. . . . An important contribution to the current debate on family values.”

—Publishers Weekly

“Clear, incisive, and distinguished by Coontz’s personal conviction and by its vast range of cogent examples, including capsule histories of women in the labor force and of black families. Fascinating, persuasive, politically relevant.”

—Kirkus Reviews

“This small book has had an outsized influence on the way social scientists think about the recent history of the American family. It remains the starting point for anyone who hopes to understand how contemporary family life came about and where we may be headed in the future.”

—FRANK FURSTENBERG, emeritus Zellerbach Family Professor of Sociology, University of Pennsylvania

“There is no better commentary on the status and processes of American families than The Way We Never Were. Stephanie Coontz writes about the realities of family life in an uncompromising way that integrates evidence-based research with the souls and everyday lives of kin within and across generations and across time and space. In my family sociology courses a spontaneous awakening occurs for students who read this book for the first time. They never look at families the same way, which is a game changer as they consider family life in their futures and question the meaning of families in their present lives. Stephanie Coontz has given the field a true gift that guides us in a journey of understanding the evolution of family life in real time and under real circumstances. Illuminating, provocative, and a must read for all!”

—LINDA BURTON, dean of social sciences and James B. Duke Professor of Sociology, Duke University

“Stephanie Coontz has her finger on the pulse of contemporary families like no one else in America. In this book, she busts numerous myths about families in the past and clearly explains what is going on in today’s families.”

—PAULA ENGLAND, 2015 president, American Sociological Association

“A powerful antidote to the misleading myths and misplaced nostalgia that too often dominate discussions of family life, this essential book tells the true history of today’s extraordinarily diverse families. Drawing upon the most recent research, the nation’s foremost historian of marriage explains why family life changed so radically in the course of a single generation, presenting a remarkably balanced perspective on the losses and gains that have accompanied this revolution.”

—STEVEN MINTZ, professor of history, University of Texas at Austin

“Her four-page survey of African American families in U.S. history is a heartbreaking essay in itself. It ought to be required reading for anyone who pontificates on ‘pathology’ in the black family.”

—San Francisco Chronicle

“[Coontz] persuasively dispels the myths and stereotypes of ‘traditional’ family values as the product of the postwar era.”

—Library Journal

“Highly instructive reading for any number of political candidates.”

—Washington Post

[Title Page]

[Copyright]

Copyright © 1992 by Basic Books, a Member of the Perseus Books Group

Introduction and Epilogue to 2016 edition copyright © 2016 by Basic Books.

All rights reserved. Printed in the United States of America. No part of this book may be reproduced in any manner whatsoever without written permission except in the case of brief quotations embodied in critical articles and reviews. For information, address Basic Books, 250 West 57th Street, 15th Floor, New York, NY 10107.

Books published by Basic Books are available at special discounts for bulk purchases in the United States by corporations, institutions, and other organizations. For more information, please contact the Special Markets Department at the Perseus Books Group, 2300 Chestnut Street, Suite 200, Philadelphia, PA 19103, or call (800) 810-4145, ext. 5000, or e-mail special.markets@perseusbooks.com.

Library of Congress Cataloging-in-Publication Data

Coontz, Stephanie.

The way we never were : American families and the nostalgia trap / Stephanie Coontz.

p. cm.

Includes bibliographical references and index. 1. Family—United States—History—20th Century. 2. United States—Social conditions. 3. Nostalgia. I. Title.

HQ535.C643 1992 91-59009 306.85'097—dc20 CIP

978-0-465-09884-2 (2016 e-book)


Introduction to the 2016 Edition

Much has changed for American families since The Way We Never Were first appeared in 1992. The most dramatic transformation has been the cultural and legal about-face regarding same-sex marriage. The prospect of legalized same-sex marriages seemed far off even when the second edition was published in 2000. As late as 2004, 60 percent of Americans still opposed granting gays and lesbians the right to marry, and in 2013 thirty-five states had laws limiting marriage to heterosexual couples.

Yet by 2014, 138 polls by twenty-one different polling organizations all found majorities supporting marriage equality. Then on June 26, 2015, the U.S. Supreme Court ruled 5–4 that marriage was a fundamental right and could not be denied to gays and lesbians. Hundreds of thousands of gay and lesbian couples across the country, many raising children, now enjoy full marital and parental rights. Unfortunately, 52 percent of the LGBT population, married and single alike, still live in states where they are subject to job or housing discrimination because of their sexual orientation or gender identity. And legalization of same-sex marriage does not help the disproportionate number of LGBT youth who become homeless after being rejected by their heterosexual parents. Nevertheless, the legalization of same-sex marriage represents a stunning turnaround from the laws and attitudes of the early 1990s.[1]

Other changes reflect the persistence of family trends that were already well established by 1992. Between 1960 and 1990, the average age at first marriage rose from twenty to twenty-four for women and from twenty-two to twenty-six for men. By 2014, it had climbed further to twenty-seven for women and twenty-nine for men. Many more people now delay marriage until their thirties or forties, and some researchers believe that a full quarter of today’s young adults may reach their mid-forties to mid-fifties without ever having been married, although unmarried cohabitation has grown more common.[2]

In 1992, living together before marriage was not yet the norm. As of 1987, only one-third of women aged nineteen to forty-four had ever cohabited. By 2013 that had doubled, and most marriages now begin after the couple is already living together. But living on one’s own may be growing even faster than cohabitation. Today almost 30 percent of American households comprise just one person.[3]

Many family trends that were in the news when this book was originally published have continued but migrated to new sectors of the population, taken unanticipated forms, or started producing different outcomes than in the past.

The typical unwed mother used to be a teenager living with one or both parents. Now she is a woman in her twenties or thirties who lives with the baby’s biological father. Almost 60 percent of out-of-wedlock births today, up from 40 percent in 2002, are to cohabiting couples, not to women living on their own.[4]

Overall, the proportion of children who are born to unwed mothers instead of married ones climbed from 20 percent in 1980 to 30 percent in 1992 and to more than 40 percent in 2015. But that doesn’t mean that 40 percent of single women are bearing children out of wedlock. Birthrates to unmarried women have indeed risen dramatically over the past fifty years. However, there are also many more unmarried women in the population than in the past, and birthrates for married women have been falling. All these factors work together to keep the ratio of unwed to married births high even though the percentage of unwed women who gave birth actually declined slightly during each of the six years between 2008 and 2014.[5]

The “rules” of marriage and divorce have been changing rapidly in the past twenty-five years. When I began working on this book, couples who lived together before marriage had a higher probability of divorce than couples who married directly. Today cohabitation no longer raises the risk of divorce. It is possible that in the near future we could even see a reversal, as has occurred in several other countries, where cohabiting before marriage lessens the chance of divorce.[6]

Up until 1995, couples who lived and had a baby together but married only afterward were 60 percent more likely to divorce than couples who waited until they married to start their family. But since 1997, cohabiters who have a baby while living together and then go on to marry have no higher chance of divorce than couples who wait until after marriage to have a child.[7]

At one time, marrying at an older age than average raised a woman’s chance of divorce. Today, every year a woman postpones marriage, right into her thirties, reduces her risk of divorce. When University of Maryland sociologist Philip Cohen recently analyzed a woman’s probability of divorce by her age at first marriage, he found that the chance of divorce goes down steadily every year until age thirty-three or thirty-four. It then ticks up slightly for ages thirty-five to thirty-nine, but plummets to new lows for those marrying for the first time between the ages of forty and forty-nine.[8]

One disturbing trend has dramatically reversed itself since the first edition of this book was published. The 1980s saw a huge spike in youthful violence, and by the 1990s politicians of all stripes were warning that rising rates of divorce and unwed motherhood were leading to an ever-escalating cycle of violence, crime, and chaos. In 1995, one respected criminologist predicted that society would soon be overrun by a wave of remorseless “superpredators.”[9]

But in reality the opposite occurred. Between 1994 and 2012, juvenile crime rates plummeted by more than 60 percent, even as the proportion of children born out of wedlock continued to rise. By 2013, according to the FBI’s Uniform Crime Reporting Statistics, the murder rate was lower than at any time since the agency began keeping records in 1960.[10]

To predict a continuous decline in violence for the next twenty years would be just as foolish as it was in 1995 to predict a continuous increase. Even after more than two decades of decline, the United States still has a far higher murder rate than other wealthy countries. And in the first half of 2015, thirty cities, including Milwaukee, Baltimore, St. Louis, New Orleans, and Chicago, saw a new surge of violence, while in other cities murder rates continued to fall.[11]

Whatever the future holds, however, the evidence of the last three decades refutes the idea that being raised by a single parent in and of itself “causes” violent behavior or personal dysfunction in children.

Some trends of the 1980s and early 1990s initially seemed to recede, only to later reassert themselves with a vengeance. In the second half of the 1990s, during Bill Clinton’s presidency, America experienced a period of economic expansion that raised real wages and boosted employment rates. When the second edition of this book appeared in 2000, I thought that my chapters on deteriorating socioeconomic conditions might soon be irrelevant.

Yet even during the height of that economic boom, two-thirds of all income gains went to the top 10 percent of earners. Income instability in the 1990s was five times higher than in the early 1970s, with adult Americans facing a much higher chance of experiencing a spell of poverty than in the 1960s and 1970s. And in light of the subsequent resumption of wage stagnation or decline for the bottom 80 percent of Americans and the continuing rise in the share of income going to the wealthiest Americans, those chapters now seem especially pertinent.[12]

By contrast, I was badly off the mark in my predictions about the prospects for marriage equality and expanded reproductive rights. I wrote in the introduction to the 2000 edition that the controversy over gay and lesbian marriage seemed likely to persist, but that the long conflict over abortion and contraception might soon be mitigated by inventions such as the morning-after pill, which prevents a fertilized egg from implanting itself, and RU486, the pill that makes an early abortion easier and more private.

It turns out I got things exactly backward. Support for same-sex marriage soared, from barely a quarter of the population to almost 60 percent, and marriage equality became the law of the land in 2015. But in 2014, the Supreme Court struck down the section of the Affordable Care Act that required employers to cover certain contraceptives for their female employees, granting a religious exemption to certain types of corporations. Many legislators and business owners have tried to block distribution of the morning-after pill, refusing to accept the medical and legal fact that it is not an abortifacient because it acts to prevent implantation of a fertilized ovum rather than to dislodge an implanted embryo. And the past decade has seen vigorous attempts to roll back women’s access to contraception and abortion, including a massive campaign to defund and discredit Planned Parenthood, an organization that Republican and Democratic political leaders alike once endorsed.

Amid these many transformations, however, one thing has not changed since my book first appeared in 1992—the tendency for many Americans to view present-day family and gender relations through the foggy lens of nostalgia for a mostly mythical past.

Nostalgia is a very human trait. When school children returning from summer vacation are asked to name good and bad things about their summer, the lists tend to be equally long. As the year goes on, however, if the exercise is repeated, the good list grows longer and the bad list gets shorter, until by the end of the year the children are describing not their actual vacations but their idealized image of “vacation.”

So it is with our collective “memory” of family life. As time passes, the actual complexity of our history—even of our own personal experience—gets buried under the weight of the ideal image.

Selective memory is not a bad thing when it leads children to forget the arguments in the back seat of the car and to look forward to their next vacation. But it’s a serious problem when it leads grown-ups to try to re-create a past that either never existed at all or whose seemingly attractive features were inextricably linked to injustices and restrictions on liberty that few Americans would tolerate today.

One example of how discussions of family life are still distorted by myths about the past is the question of how marriage has evolved historically. Both sides in the Supreme Court decision extending marriage rights to same-sex couples demonstrated confusion on this issue. In his dissent from the majority opinion, Chief Justice John Roberts wrote, “For all . . . millennia, across all . . . civilizations, ‘marriage’ referred to only one relationship: the union of a man and a woman.” Its primordial purpose, Roberts asserted, was to make sure that all children would be raised “in the stable conditions of a lifelong relationship.”[13]

These assertions are simply not true. The most culturally preferred form of marriage in the historical record—indeed, the type of marriage referred to most often in the first five books of the Old Testament—was actually of one man to several women. Some societies also practiced polyandry, where one woman married several men, and some even sanctioned ghost marriages, where parents married off a son or daughter to the deceased child of another family with whom they wished to establish closer connections.[14]

The most common purpose of marriage in history was not to ensure children had access to both their mother and father but to acquire advantageous in-laws and expand the family labor force. The wishes of the young people being matched up and the well-being of their offspring were frequently subordinated to those goals. That subordination was enforced through the institution of illegitimacy, which functioned to deny parental support to children born of a relationship not approved by the kin of one or both parents or by society’s rulers. In Anglo-American common law, a child born out of wedlock was a filius nullius, a child of nobody, entitled to nothing. Until the early 1970s, several American states denied such children the right to inherit from their biological father even if he had publicly acknowledged them or they were living with him.[15]

Justice Anthony Kennedy, meanwhile, wrote an eloquent majority opinion in support of marriage equality. Labeling marriage a “union unlike any other in its importance” to two committed persons, Kennedy argued that gays and lesbians deserved to marry because lifelong unions have “always . . . promised nobility and dignity to all persons” and “marriage is essential to our most profound hopes and aspirations.”[16]

These claims are also at odds with historical reality. For thousands of years, marriage conferred nobility and dignity almost exclusively on the husband, who had a legal right to appropriate the property and earnings of his wife and children and forcibly impose his will upon them. As late as the 1970s, most states had “head and master” laws, giving special decision-making rights to husbands, while the law explicitly defined rape as a man’s forcible intercourse with a woman other than his wife.

Today, a marriage based on mutual respect and commitment is a wonderful thing for both partners and for any children they have. But a bad marriage is often worse than singlehood for the health and well-being of most family members. Insisting, as Justice Kennedy does, that marriage is essential to fulfill “our most profound hopes” makes it difficult for society to respond to the needs—or recognize the contributions—of the growing number of singles and unmarried couples in America. It may also encourage people to expect too great a transformation in their well-being from getting married, while frightening or stigmatizing those who have good reason to divorce.[17]

As I explain in chapter 5, marriage has not always been the primary route to achieving meaning in people’s lives. Early Christian theologians, for example, valued unwed celibacy much more highly than the wedded state, explaining that marriage distracted men and women from their duties to God and to the larger Christian community. Recent research offers some secular justification for such concerns: Married individuals are less likely than their unmarried counterparts to provide time and assistance to aging relatives, neighbors, and friends.[18]

The flip side of exaggerating the historical benefits of marriage has been a persistent tendency to blame poverty and other social ills on divorce and unwed motherhood, even though poverty and material hardship were more widespread in the marriage-centric 1950s than they are today. People forget that women and children bore the brunt of poverty within many “traditional” two-parent families just as surely as they do in modern female-headed households. Researchers across the world often find two different standards of living in the same married-couple household, with the wife and children doing without in order to give the husband first call on the family’s economic resources.[19]

In chapter 11 I discuss what’s wrong with the claim that unwed childbearing is the primary cause of poverty, economic insecurity, and inequality. Recent research bears out my argument. A 2015 study concluded that overall, between 1979 and 2013, income inequality was more than four times as important as family structure in explaining the growth of poverty. Another recent study concludes that since 1995, the role of single parenthood in contributing to economic instability has diminished even more. Instead, the authors emphasize, we have seen a “broadly-based increase in income insecurity that is concentrated neither among low-skill workers nor single-parent families.”[20]

Yet politicians and pundits continue to recycle the myth that poverty and inequality are the result of marital arrangements rather than larger socioeconomic forces. A 2012 report for the Heritage Foundation by Robert Rector, “Marriage: America’s Greatest Weapon Against Child Poverty,” insists, even after the Great Recession plunged so many married families into poverty, that “the principal cause [of child poverty] is the absence of married fathers in the home.” (You will find Rector quoted in this book saying exactly the same thing in 1989.) And a 2014 publication of the U.S. House Budget Committee, “The War on Poverty: 50 Years Later,” totally misrepresents the accomplishments of the War on Poverty (some of which I outline in chapters 4 and 10), before joining the chorus with the claim that “the single most important determinant of poverty is family structure.”[21]

The tenacity of such myths about families makes most of what I wrote in the first edition of this book still timely. Yet the context in which these myths operate and the realities they obscure have shifted. What follows is an overview of the book’s chapters in light of recent developments and new research findings. I briefly indicate where these confirm or modify my arguments and provide up-to-date citations so readers can pursue any topic that interests them. Those interested in supplementing the historical topics with related articles on current trends and debates might consult the weekly briefing reports issued by the Council on Contemporary Families.[22]

The first chapter examines a few common myths about family forms and features in past times, challenging some of what I termed the “wild claims and phony forecasts” so common in the mass media. For example, I refute a claim by William Mattox, a speechwriter for several members of Congress, that parents in the 1990s were spending 40 percent less time with their children than parents did in 1965. Yet in 1999 Time magazine reiterated that claim, and even today I get phone calls from reporters convinced that the children of today’s employed moms are getting much less attention than in the past. With fifty years of time-use studies under our belt, we can say decisively that this is untrue.[23]

It appears that parental time with children did decline between 1965 and 1975, as more women entered the workforce. In that era, fathers had not yet stepped up to the plate at home, and families were struggling to find a new equilibrium.

But after 1985, even as women’s labor participation and work hours continued to increase, the child-care hours put in by mothers rose to the point that those hours were significantly higher than in 1975 and 1965. Meanwhile, fathers’ child-care time tripled. Full-time homemakers do spend more time on child care than employed moms, and married mothers do spend more time than single mothers, but the differences are surprisingly small (between three and five hours a week, depending on the study). And today’s single and working moms spend more time with their children than married homemaker mothers did back in 1965.[24]

What about the persistent claim that marriage is a dying institution? People have been predicting the death of marriage for almost a century. In 1928, John Watson, the most famous child psychologist of that era, predicted that, given existing trends in divorce, marriage would be dead by 1977. In 1977, sociologist Amitai Etzioni declared that if current trends continued, by the 1990s “not one American family will be left.” In 1999, the National Marriage Project announced breathlessly that the marriage rate had fallen by 43 percent since 1960. And in 2010, a Pew Research Center poll found that 40 percent of Americans said marriage was “becoming obsolete.”[25]

Let’s look a little closer at the supposed collapse of marriage. First of all, the marriage rate is calculated on the basis of how many single women eighteen years and older get married each year. In 1960 half of all women were already married before they turned twenty-one. Today, the average age of marriage for women is twenty-seven, so it’s no surprise that the percentage of women over eighteen who are married is much lower.

But most people eventually marry. As of 2013, more than 80 percent of fifty-year-olds—people who went to high school in the 1980s—were married. And those who are still unmarried at age forty or fifty have much better prospects for marrying, should they want to, than in the past.

In 1960 only 2.8 percent of women and 3.5 percent of men married in their forties and fifties. At my request, sociologist Philip Cohen, working with a cross section from the 2011–2013 American Community Survey, calculated how many women who had never been married by the time they turned forty went on to marry in the next ten years. On the basis of those calculations, he projects that 23 percent of women who reach age forty without having married will wed in the following ten years. For women who are college graduates, that rises to 26 percent.[26]

Interestingly, among forty-year-old, never-married black women, the percentage who go on to wed in the next ten years is even higher: 31 percent. There is a very wide gap in first-marriage rates between black and white women in their twenties and early thirties, but that begins to narrow at older ages. Cohen projects that about 85 percent of white women and 78 percent of black women will be married or will have been married by the time they reach age eighty-five. This is lower than the 96 percent of white and 91 percent of black women who were ever married at age eighty-five-plus as of 2010, and as we shall see, the late convergence in black and white women’s marriage chances reflects some disturbing trends in the life prospects of young black men and in black children’s chances of being raised in a two-parent family. But it certainly indicates that marriage is not on the verge of extinction.

As for the 40 percent of Americans who told pollsters in 2010 that marriage was “becoming obsolete,” most of them simply meant that marriage is no longer an institution you have to enter in order to have a respectable or satisfying life. Almost 60 percent of singles of all ages said they wanted to marry, with only 12 percent saying they did not. And in a separate poll, fully 70 percent of unmarried eighteen- to twenty-nine-year-olds said they wanted to get married, even though most of them believed it was possible to have a happy life without marriage. Finally, 96 percent of married respondents in the 2010 Pew poll said their relationship was as close as or closer than that of their parents. A majority (51 percent) said it was closer.[27]

There are many social and cultural consequences of a rising age of marriage, and rates of nonmarriage are clearly on the increase as well. But we cannot evaluate these trends realistically if we exaggerate them to suggest that marriage is about to disappear.

It is also important to note that many of the trends that led to predictions of the collapse of marriage have since slowed or leveled off. The divorce rate has actually fallen, although, as I explain in the epilogue, most of that decline is concentrated among highly educated Americans. Overall, however, 70 percent of people who married for the first time in the early 1990s were still together at their fifteenth anniversary, up from 65 percent of those who wed in the 1970s and 1980s. Economist Justin Wolfers reports that marriages formed in the 2000s seem to be on track to divorce even less frequently. The divorce rate, which peaked in 1979 at 22.8 divorces per 1,000 married women, was down to 17.6 divorces per 1,000 married women in 2014.[28]

Nevertheless, people do live longer portions of their lives outside marriage than in the past, and when they marry the union does not always last until old age. It no longer makes sense to organize family law and social policy on the assumption that marriage is the only place where people enter into commitments that must be recognized or incur obligations that need to be enforced. For example, nearly as high a percentage of cohabiting partners are raising children as married ones.[29]

Modernizing our legal assumptions about family life makes more sense than trying to force people back into lifelong marriages, an effort that is unlikely to succeed and could well create more difficulties than it would solve. While there are many real problems associated with America’s high divorce rates, the ability to leave a marriage more easily has helped not only those escaping bad marriages but also many who remain married, because it gives the partner who wants change more negotiating power. A study of what happened as various states adopted no-fault divorce laws in the 1970s and 1980s found that in the first five years following adoption, wives’ suicide rates fell by 8 to 13 percent, and domestic violence rates within marriage dropped by 30 percent.[30]

Such trade-offs are the stuff of family history. Losses in one area lead to gains in another, and vice versa. New challenges arise in the process of solving old problems. But the historical record is clear. Although there are many things to draw on from our past, no family system has ever immunized people against economic loss, social problems, or personal dysfunction.

This is certainly true of the most atypical family system in American history, the post–World War II male-breadwinner family that is the subject of chapter 2. It is easy to understand why many people harbor nostalgia for the 1950s. Job security—at least for white men—was far greater than it has been for the past forty years. Decent housing was much more affordable for a single-earner family. Unlike in recent decades, real wages were rising for the bottom 70 percent of the population as well as for the top earners, and income inequality was falling.

But most of these positive developments flowed from the economic and political support systems I describe in chapters 2 and 4, not from the internal arrangements of 1950s marriages, which most modern Americans would find unacceptable and which contained contradictions that helped overturn the gender and sexual norms of that era. My students are dumbfounded when they read the advice books aimed at women in that era or when they watch episodes of Father Knows Best, like the one titled “Betty, Girl Engineer,” where the family’s teenage daughter learns it is foolish to try to do a “man’s job” because she will be treated much better if she dons a pretty new dress and waits to be asked out on a date.

Psychiatrists in that era insisted that the “normal” woman found complete fulfillment by renouncing her personal aspirations and identifying with her husband’s achievements. Something was badly wrong, they warned, if a woman or man “usurped” any rights or duties belonging to the other sex. In movies and on TV, you could generally tell that a family was seriously dysfunctional if the man was shown washing dishes or—worse yet—running a vacuum cleaner.[31]

Chapter 3 discusses the origins, functions, and contradictions of such polarized ideas about gender. Far from being natural or traditional, the notion of males and females as opposites, with women focused on the family and men on the larger world, emerged only in the early nineteenth century, as the production of goods and services moved away from the household, thereby physically separating the work involved in raising and nurturing a family from the work involved in provisioning it. I explain how new cultural norms assigned bread-winning and ambition to men and homemaking and altruism to women, with the expectation that love would bridge the widening gap between the experiences and values of the two.

That notion of love as a union of opposites has become increasingly problematic for heterosexual couples in recent decades. Today, fewer than one-third of Americans—an all-time low—believe that men and women have dissimilar capabilities and should play different roles at work and at home. Yet men and women are still bombarded by cultural norms that emphasize difference as the basis of erotic desire. Not surprisingly, heterosexual couples are often torn between their new values and older scripts for behavior. The advent of same-sex marriage may provide new models for how heterosexual couples can combine equality, intimacy, and sexual desire.[32]

In chapter 4, I discuss the myth of self-reliance. People have always depended on support systems beyond the family, including government. This is especially evident when we look closely at the supposedly independent frontier families and the male-breadwinner families of the 1950s, both of which in fact relied on extensive government subsidies. But there has been a long-standing myth that only minority families need or benefit from government assistance.

In the 1980s and 1990s, the image of the “welfare queen” epitomized the myth that only African Americans relied on government assistance. The political backlash against social welfare programs that this notion helped fuel led to the abolition of the Aid to Families with Dependent Children (AFDC) program and passage of the Personal Responsibility and Work Opportunity Reconciliation Act (PRWORA) in 1996. This legislation replaced AFDC with a program called Temporary Assistance for Needy Families (TANF).

TANF abolished AFDC’s guarantee of cash assistance to all eligible poor families. Instead it gave states a fixed pool of money for income-support and work programs, along with considerable leeway about how to spend it. Some states adopted the most generous policies permitted by federal law, while other states made their programs far more restrictive.

In most states, recipients can now get work credit for only one year of vocational training, so recipients trying to complete college lose child-care vouchers and cash assistance. The act also imposed strict lifelong limits on the amount of aid a family or individual can receive, no matter how long a person works between spells of hardship. And as of 2015, Congress had not adjusted the funding of the basic grant since 1996, causing its real value to fall by one-third.

In 1992, I criticized the evidence that was being used to attack AFDC. Yet the first four years of the TANF program seemed promising. Welfare caseloads fell sharply, and as of 1999, somewhere between 61 and 87 percent of adults leaving public assistance had found jobs. Though few of those low-wage jobs paid enough to move a family out of poverty, the number of families with children living in poverty did fall.[33]

But these successes were due partly to the rapidly expanding job market in the temporary economic boom of the late 1990s and partly to the 1990 and 1993 increases in the Earned Income Tax Credit (EITC), a program that puts extra income in the hands of low-wage workers and has become a very successful antipoverty program for such individuals. However, the EITC could not help—and TANF often would not help—people who could not find work, who had trouble keeping jobs because of physical or mental health issues or lack of child care, or who had exhausted their lifetime limits due to sporadic spells of unemployment. So even during the economic expansion from 1996 to 2000, the poorest families, especially single-mother families, lost ground.[34]

After the economic expansion ended in 2000, things got considerably worse. While nearly half of all Americans now receive some form of government benefits, over the past several decades a declining portion has gone to the poorest families. In 1995, the AFDC program lifted more than 2 million children out of deep poverty, accounting for 62 percent of children who would otherwise have been classified as severely impoverished. By 2010, TANF lifted only 629,000 children—just 24 percent—out of deep poverty.[35]

Despite the sharp increase in unemployment and economic insecurity among all racial and ethnic groups during the Great Recession, the racialized myth that only the (black) poor need government help continues. In the elections of 2012 and 2016, politicians used more toned-down phrases than in the 1990s, criticizing unspecified groups who supposedly wanted “free stuff” from the government. But since these remarks were usually delivered to or about black voters, the message came through loud and clear.

As I show in chapter 4, however, every American gets “free stuff” from government. The home mortgage interest deduction, which largely benefits the top 20 percent of income earners, now costs roughly the same amount as the food stamps program, about $70 billion a year. And a 2012 New York Times report calculated that federal and state governments had given away $170 billion in tax breaks and incentives to businesses without demanding any accountability as to whether they actually produced long-term jobs or even stayed around long enough to make up for the tax losses the communities incurred.[36]

Meanwhile, many states try to balance their budgets on the backs of the poor. In June 2015, for example, the Missouri state legislature voted to cut thousands of families from the state’s cash assistance program. They reduced the state lifetime limit for Temporary Assistance for Needy Families from sixty to forty-five months, slashed cash benefits by half for those who do not find jobs, and redirected a significant portion of welfare funds toward programs that encourage marriage and alternatives to abortion.[37]

Chapter 4 also describes how the myth of personal self-reliance helped produce the savings and loan crisis of the 1980s, a crisis that was in many ways a dress rehearsal for the 2007–2009 financial crisis. Yet legislators remain wedded to the historically disproven notion that subsidies to banks and corporations create jobs while subsidies to families create only laziness.

If nuclear families were not traditionally expected to be economically self-reliant, neither were they expected to be emotionally self-reliant. In chapter 5, I show that not until the late nineteenth century were Americans urged to make the nuclear family the central locus for their loyalties and obligations. The new ideal was quite different from views of civic responsibility in the early republic, in which being a good “family man” was two steps below the most esteemed level of citizenship.[38]

One consequence of the new family-centric ideal was a troubling narrowing of moral discourse, with people being judged primarily on the basis of their sexual and family behavior, not their civic, economic, or political actions, even though the latter often have farther-reaching consequences. For example, during the Monica Lewinsky scandal of Bill Clinton’s presidency, Clinton received far more censure for his dishonesty about his sexual behavior than for another piece of moral evasiveness: his politically motivated decision to ignore the recommendation of a bipartisan panel that providing needles to drug addicts would save lives and curtail the spread of HIV without increasing drug addiction.

Today we are even more preoccupied with sexual exposés than in the 1990s. Many people on both the left and the right prefer to focus on the sexual hypocrisies or lapses of their opponents rather than to analyze the morality of their political positions or economic transactions.

Chapter 6 traces the complex historical relationship between family privacy, individual autonomy, law, and the state. It shows that families have never been immune from outside interference. In the early years of America, slaves and white indentured servants were often denied a family life. Even free white and black families were subject to strict oversight of their internal affairs by local governments. But in that era many diverse family forms coexisted, and there was as yet no coherent national program to promote a single family arrangement.[39]

Over the course of the nineteenth century and the first two-thirds of the twentieth, however, the federal government made a concerted effort to establish one particular family form as the norm: a nuclear family headed by a man whose wife was legally and economically dependent on him.

Officials forced Native American extended families off their collective property and onto single-family plots. They made Indian men the public representatives of families, ignoring the traditional role of women in community leadership, and placed Indian children in boarding schools to eradicate traditional Native American values. They also moved to squash the numerous utopian communities that experimented with celibacy or with group marriage in the early nineteenth century and denied Utah statehood until the Mormons renounced their advocacy and practice of polygyny in 1896. In the twentieth century, immigration laws, tax policies, zoning regulations, unemployment insurance, and welfare policies were all constructed in ways that penalized family and household arrangements that did not conform to the male-breadwinner nuclear model.[40]

In chapter 7, I trace women’s increasing participation in the labor force and its relation to the rise of feminism, arguing that women’s (re)entry into the workforce had deep historical roots, and that most women, including wives and mothers, are in the workforce to stay. The percentage of working moms continued to rise throughout the 1990s, with the share of stay-at-home mothers hitting an all-time low of 23 percent in 1999. However, the proportion of homemaker moms rebounded slightly between 2000 and 2004 and then again between 2010 and 2012, reaching 29 percent. This led some observers to suggest that educated women were “opting out” of careers in order to stay home with their children. But in fact most of the increase was driven by a growing number of mothers who could not find jobs. By 2015, a few more years into the economic recovery, the Pew Research Center reported that the share of two-parent households with a breadwinner father and a stay-at-home mom had fallen back to 26 percent. Overall, women today are only half as likely as in 1984 to leave their jobs after having a child.[41]

Contrary to widespread belief, the largest proportion of stay-at-home moms in the U.S. population today is found among women married to men in the bottom 25 percent of wage earners, not the top 25 percent. Often these women want to work but cannot earn high enough wages to pay for transportation and child care. They are younger and less educated than the average employed woman, and many are immigrants. Educated professionals are actually more likely than other women to return to work soon after having a child.[42]

Affluent, highly educated stay-at-home moms exist, of course, but they make up a much smaller group. Some did not so much opt out as get pushed out of their jobs by the refusal of employers to grant requests for more work-family flexibility. Others stay home because they are married to men whose high earnings depend upon exceptionally long workweeks, making it almost impossible to manage a household without one person at home full-time.[43]

In 2012, the recurring assertions that women were backtracking from the pursuit of financial independence were briefly interrupted by two books that proclaimed “the end of men” and the emergence of women as “the richer sex.” Both books correctly noted the impressive advances in women’s earnings, achievements, and aspirations since the 1970s but downplayed the fact that women still make up the majority of low-wage workers in the United States and still earn less, on average, than men working similar hours with similar levels of education.[44]

The woefully inadequate work-family policies prevalent in the United States explain a good part of this wage gap and may impose an upper limit on the workforce participation of women. Today every other wealthy country guarantees paid leave to new mothers, and most also offer paid leave to fathers. In addition, they mandate annual paid vacations for workers. The United States offers none of these benefits, which helps explain why between 1990 and 2010, the United States fell from sixth to seventeenth place in female labor force participation among twenty-two developed countries in the Organization for Economic Cooperation and Development.[45]

Chapter 8 details the main changes in marriage, sex, reproduction, and life-course patterns from the late 1800s to the early 1990s. These changes have continued in subsequent decades, with a few twists that have shifted attention from teenagers to college students. In 2014, teen childbearing reached record lows for all racial and ethnic groups, in large part because teens had started using contraception more consistently. But teenagers have also been delaying sexual initiation longer than they did in the late 1980s and early 1990s.[46]

Perhaps as a result, in recent years there has been less public anxiety about teen sexuality and more about the hookup scene on college campuses. As women and men delay marriage and parenthood in order to complete their schooling and launch careers, new questions arise regarding how young people should manage a prolonged period of sexual maturity and activity while they may be reluctant to embark on a serious relationship or feel they don’t have the time to invest in one.

Hookups are one way many students deal with this issue, sometimes successfully, sometimes not. But shaming young women for having sex works no better than shaming them for not having it. In fact, the cult of purity embraced by some young women and the “Girls Gone Wild” spring break exhibitionism embraced by others are equally limiting for women, since each in its own way defines a woman’s value by her sexuality.[47]

Anxious hand-wringing and lurid headlines should not delude us into thinking that young people are destroying their chances for lasting relationships. This generation did not invent the “one-night stand,” and dating and long-term relationships continue on college campuses. Over the long run, college graduates are more likely to marry than any other group. Hookups fill some of the space in between, though less of that space than is often assumed.[48]

One recent study found that fewer than half of all campus hookups involved sexual intercourse, and when intercourse did take place, it was mostly between people who had hooked up before. Sexual intercourse occurred in less than 30 percent of the hookups between people connecting for the first time.[49]

Between 2005 and 2011, sociologist Paula England directed an online survey of over 20,000 students at twenty-one four-year U.S. colleges and universities, asking about their experiences with dates, hookups, relationships, and sex. England has documented many gender inequities in hookups, including a significant “orgasm gap.” There is also a clear double standard, she reports, with women judged more harshly than men for having casual sex or for having sex with numerous partners. And because hookups are often preceded by heavy drinking, women risk experiencing sex when they are too incapacitated to actually consent or are too drunk to enjoy it if they do consent. Nevertheless, almost as many women as men reported that they enjoyed their last hookup. And a 2015 survey by Arielle Kuperberg and Joseph Padgett found that a slightly higher percentage of men than women expressed the desire for more opportunities to find someone with whom to initiate a relationship.[50]

Forcible assault and incapacitated nonconsensual sex on college campuses have been much in the news recently. In a 2015 survey of 150,000 students conducted by the Association of American Universities, 27.2 percent of female college seniors reported that, since entering college, they had experienced some kind of unwanted sexual contact. This was widely reported to mean that one in five college students had been victims of rape and sexual assault, even though the authors of the report warned against such a “misleading” conclusion. The survey lumped together sexual assault and sexual misconduct (which included unwanted sexual touching, kissing, or groping or attempts at sexual intimacy while the woman was still deciding).

All these actions represent a disrespectful approach to women and an assumption of masculine sexual entitlement, but we should note that less than 14 percent of the survey participants reported experiencing penetration, attempted penetration, or oral sex, whether by force or while incapacitated by drugs or alcohol. Furthermore, the response rate was very low—only 19.3 percent. This probably selected for a higher-than-average proportion of women who had had bad experiences, since such women tend to be more motivated to report them. None of these points should be taken to minimize the problem, but the more precise we are about the issues we face, the more likely we are to develop effective solutions. And we should never forget that the most frequent victims of sexual violence and partner abuse are women in low-income communities who have not had the opportunity to attend a four-year college. A 2014 White House task force report reviews promising programs that have been developed in high school and middle school settings and might be applied more widely both in low-income communities and on college campuses.[51]

Since I wrote this book, two changes in the life course have become increasingly evident. One is the growing length of time and variety of paths that young people take to reach the traditional markers of adulthood, such as establishing an independent residence, settling into a line of work, and getting married. The other is the extension of the active life span, which has created a new diversity of living arrangements and behaviors among people in their sixties and older.

During early adulthood, we have seen the development of a new stage of life in which many young people free themselves from parental supervision or control but postpone settling into other clear-cut and relatively permanent roles, responsibilities, and stable social networks. Compared to fifty years ago, far fewer men and women today have completed their education, achieved financial and residential independence from their parents, gotten married, and committed to a line of work by their mid-twenties or early thirties. Almost 40 percent move back home at least once after first moving out. Many are not even in a committed long-term relationship, with or without a wedding ring.[52]

Some observers view this prolongation of the period between adolescence and traditional adulthood positively, as an unprecedented opportunity for exploring identity, developing self-confidence, and building social networks. Others view it as a self-indulgent refusal to “grow up,” a reflection of rampant narcissism produced by overprotective helicopter parenting.[53]

But we saw a similar development in the second half of the nineteenth century. As a long-established agrarian and village way of life was disrupted by the spread of wage labor, many young people also delayed marriage to what were then unprecedented ages, changing jobs and residences as frequently as most young people do today. And the “overdependence” on parents that many criticize in young people today seems fairly mild in comparison to the nineteenth-century intensity of mother-child ties. Young men carried pictures of their mothers off to war and wrote poems about missing the “caress” of their mother’s hair against their cheek. According to historian Susan Matt, many soldiers suffered such severe homesickness during the Civil War that military officials prohibited bands from playing “Home, Sweet Home” for fear it might make young soldiers literally ill from nostalgia for mother and home.[54]

In many ways, today’s delays in independent residence, long-term job commitments, and marriage are a rational response to the complicated socioeconomic trends I describe in my epilogue. Despite the excesses of some helicopter parents, whether those involve pushing their kids too hard or indulging them too much, youths whose parents subsidize or otherwise assist them during this period of life generally end up doing better in the long run than their counterparts who do not receive such parental assistance.[55]

The later stages of adulthood have also become more prolonged and individualized. Older adults are now more likely than in the past to be living with a spouse, largely because of increases in the life span. But the proportion of older adults who live together without marriage has increased fourfold since the 1960s. Meanwhile, adults over age sixty who do not have a partner are much more likely than in the past to live alone rather than with relatives. In many cases this is their preference, facilitated by access to Social Security and Medicare.[56]

The increase in the active life span is surely welcome, but it has made staying together “until death do us part” a bigger challenge than in the past. Researchers Susan L. Brown and I-Fen Lin report that the divorce rate of people aged fifty and over has doubled since 1990. Today one in every four people who divorce is over age fifty, and nearly one in ten is sixty-five or older. As with divorces at younger ages, the majority of these divorces are initiated by women, despite their greater financial vulnerability.[57]

For some individuals, divorcing at this age offers the chance to reinvent themselves. Today a person still healthy at sixty-five can look forward, on average, to another twenty years of life during which to pursue his or her interests. Many meet new partners through online dating, which has been a huge boon to people in their fifties and older.

But divorce at an older age also involves financial losses that can seldom be recouped so late in life, which is often a particular challenge for women, and it may deprive individuals of needed social support networks, which is often a particular challenge for men. Here too, the growing diversity of living arrangements and intimate relationships should cause us to rethink social policies that still assume the universality of lifelong marriage as the main source of caregiving.[58]

Chapter 9 discusses the cross-cultural variations and historical transformations in how “good parenting” is defined. It questions what Arlene Skolnick has called “the myth of parental omnipotence”—responsibility for everything good or bad about the way children turn out—along with the flip side of this idea, the notion that children today are beset with new dangers against which parents have little defense.

Some of the fears I discuss in this chapter have abated, but new ones have taken their place, many involving online predators and cyber bullying. These are real problems, but most researchers don’t believe they are worse than in the pre-electronic age. Stories about horrific but uncommon crimes can distract us from more widespread and preventable problems. Many experts, for example, worry more about well-meaning adults who amuse infants and toddlers with electronics, because early language acquisition and social learning depend heavily on a child’s social interaction with real people.[59]

Debates also surround the use of social media by teens and young adults, which has exploded since the early 1990s. According to a 2015 survey by the Pew Research Center, one-quarter of all teens aged thirteen to seventeen say they are online “almost constantly,” while another 56 percent say they go online several times a day. Some experts worry that the reliance on social networking instead of social interaction is eroding youths’ ability to read emotions and develop deep relationships with others. Others insist that we can use the technology to strengthen family ties and social relationships.[60]

An ongoing concern is whether preschool children suffer when their mothers are employed outside the home. As late as 1977, nearly 70 percent of Americans believed this was the case. By 2000, the public was evenly split on this question. And by 2012, only 35 percent believed this was true. Still, this means more than one-third of Americans think children are harmed by having an employed mother and/or by the use of child care. So it’s worth noting that recent research confirms the skepticism I expressed about such fears back in 1992.[61]

A 2008 review of more than seventy studies in the United States found that maternal employment had no significant negative effects on young children, although a 2010 study found that, on average, children fared slightly better if mothers worked fewer than thirty hours a week for the first six to twelve months after birth. Another study recorded small negative effects of informal home care, whether provided by relatives or nonrelatives, but discovered “no detrimental effects” of nonmaternal care for children enrolled in formal, center-based care.[62]

When mothers work nonstandard hours and shifting schedules, their children tend to score lower on certain cognitive tests. This may be due in part to greater maternal stress and exhaustion, but it is also because such work schedules make it more difficult to place children in professional child-care centers.[63]

Studies of children of working parents in the United States may be skewed toward the negative because of the lack of policies that ease a worker’s transition to parenthood. Almost one-quarter of new mothers in the United States go back to work only two weeks after giving birth. Such a short period at home makes it more difficult for a mother to learn her new baby’s cues, increases parental stress, and may well pull down the average adjustment scores.[64]

Researchers find more positive results when they look at countries that have better work-family policies and child-care regulations. In Britain, researchers who controlled for the education and household income of mothers found that children of two-earner families actually had higher measures of well-being than children of married one-earner families. And a 2013 study of 75,000 Norwegian children found no behavioral problems linked to the length of time children spent in day care.[65]

Such research reinforces the historical lesson of chapter 9, which is that children can thrive in a wide variety of caregiving arrangements. However, the consequences of family structure for childrearing remain controversial. Certainly, it is a challenge to raise a child by oneself or to renegotiate parental and partner relationships after a divorce. But many of the apparent effects of family structure are greatly reduced when we control for factors such as parental substance abuse, depression, unemployment, poverty, personality disorders, educational deficits, and aggressive or violent behavior—all of which increase the likelihood that people will not marry or will divorce but also have negative effects on child outcomes even when parents manage to stay together.

One recent study found that for children with well-educated mothers, being raised in a single-parent household or stepfamily conferred no disadvantages in school readiness compared to children of equally educated mothers in their first marriage. But among children of less educated mothers, those raised in single or remarried households had worse math and reading skills than children of continuously married mothers, perhaps because the less educated and lower-income mothers had fewer resources to compensate for having only one adult in the home or to help them cope with the conflicts that often arise in the course of blending two families. Still, a 2015 study by sociologist Michael Rosenfeld found that the negative child outcomes often associated with single-parent families become statistically insignificant once the amount of family instability and number of family transitions are taken into account.[66]

Similarly, a recent British study found no statistically significant differences in the social and emotional development of children of married and cohabiting parents once the researchers controlled for precarious financial situations, low education, likelihood of the pregnancy being planned, and relationship quality between the parents.[67]

It’s also important to remember that averages mask substantial variations. Since most people recover well after an event such as a death or divorce, even a small number who do poorly can create a false sense of risk for the whole category. This is why many researchers now spend less time comparing the average outcomes of different family structures and more time studying the variations, outliers, and divergent responses within each category, focusing on what processes are most helpful in different family configurations.[68]

For example, it is now clear that there is no such thing as “the” impact of divorce on children. One recent study found that while 24 percent of children experienced declines in reading scores following parental divorce, 19 percent experienced increases, and the remaining 57 percent showed no change. Problems such as aggressiveness and bullying increased among 18 percent of children following their parents’ divorce but declined for 14 percent. There was no change for the other 68 percent.[69]

During the run-up to the Supreme Court decision on same-sex marriage, much worry was expressed over whether children could be raised successfully by two parents of the same sex. We now have several high-quality, long-term studies showing that children raised by same-sex parents fare as well as those residing in different-sex-parent households in their academic performance, cognitive development, social development, and psychological health. They are no more likely to engage in early sexual activity or substance abuse than children of heterosexual couples. Most become heterosexuals, although they are more likely than children raised by different-sex parents to be open to the possibility of same-sex attraction. While they may face teasing or bullying, as many children do for many different reasons, the best protection against that is a supportive climate in schools and communities.[70]

I wrote chapter 10 to dispel some long-standing myths about black families. The racial landscape of the United States has become increasingly diverse in recent decades. In 1960, 85 percent of the population was white, 11 percent was black, less than 4 percent was Latino, and less than 1 percent was Asian or Native American. Only 6 percent of the population was foreign-born.

By 2012, the foreign-born and African Americans each comprised 13 percent of the population, and Latinos made up 17 percent. Asians were 5 percent, but since 2011, the number of Asian immigrants—primarily from India and China—has surpassed the number coming from Latin America. Since 2000, when individuals first became able to identify themselves as more than one race on the census, the number of individuals doing so has been growing three times faster than the population as a whole; they now comprise 7 percent of the population. Less than 2 percent of the population identifies as American Indian or Alaska Native, but this group too has been increasing at a faster rate than the population as a whole.[71]

While all minority groups must grapple with harmful stereotypes and varying degrees of economic disadvantage and outright discrimination—the poverty rates of Native Americans and Latinos, like those of African Americans, are especially high—the divide between blacks and whites remains particularly intractable. African American families still tend to be hit harder in financial crises and to recover more slowly. Between 2010 and 2013, during the recovery from the recession, child poverty dropped for all groups except blacks, leaving African American children “almost four times as likely as white or Asian children to be living in poverty . . . and significantly more likely than Hispanic children.” And by 2013 the wealth gap between black and white households was wider than at any time since 1989.[72]

There have certainly been noteworthy improvements for African Americans over the past half century, and there is much less societal tolerance for overt racism than in the past. In 1958, 94 percent of Americans disapproved of marriage between blacks and whites. As late as 1991, while I was writing the first edition of this book, less than half (48 percent) approved of such marriage, with 42 percent still disapproving. But by 2013, 87 percent of Americans said they approved of black-white marriage, with just 11 percent disapproving. And in 2008, American voters elected a black president, reelecting him in 2012.[73]

Already by 1992 we had seen the growth of a substantial black middle class and the accession of significant numbers of African Americans to leadership positions in economic, political, and cultural circles. The proportion of black urbanites living in conditions of racial isolation fell from nearly half in 1970 to one-third in 2010, and in the decade between 2000 and 2010 the number of “hypersegregated” metropolitan areas fell from thirty-three to twenty-one.[74]

But while the number of hypersegregated metropolitan areas has declined, the degree of segregation in cities that remain highly segregated, such as Baltimore, Birmingham, Chicago, Cleveland, and St. Louis, has hardly budged. Such racial segregation interacts with another form of hypersegregation that has surged over the past thirty years: neighborhood segregation by income. In 1970, residential income segregation was lower among blacks than whites. Today it is 65 percent higher. Almost one-third of blacks born between 1985 and 2000, compared to only 1 percent of whites, live in neighborhoods where 30 percent or more of the residents are poor. A 2012 report from the University of California, Los Angeles’s Civil Rights Project notes that the typical black student now attends a school where almost two-thirds of his or her classmates are low income.[75]

In some ways the progress attained by a layer of affluent blacks may have made the experience of such exclusion and material deprivation even more searing for residents of these poorest communities. But even higher-income African Americans have not attained equality with their white counterparts. Although middle-class blacks are now much more likely than in the past to live in racially mixed neighborhoods, they are also more likely to have low-income white neighbors than are white families with comparable middle-class incomes.[76]

Furthermore, virulent racist ideas are alive and well among a significant minority of Americans. According to surveys conducted between 2010 and 2014 by the NORC at the University of Chicago, 31 percent of the supposedly “postracial” Millennial generation believe that blacks are lazier than whites.[77]

Even well-meaning people continue to harbor misconceptions based on racial stereotypes. Few Americans realize, for example, that black fathers who live with their children are more likely than coresidential white fathers to bathe, diaper, dress, or help their younger children with the toilet each day and to help their older children with homework. In general, unwed fathers who do not live with their children do less for and with them than live-in fathers, and a higher proportion of black children than whites grow up in homes without a father present. But recent research confirms what I reported in 1992: nonresidential black unwed fathers are more likely than their white counterparts to visit and offer practical assistance to their children. And many people would be surprised to learn that out-of-wedlock birthrates for black women have been falling since 1990 and by 2010 were lower than at any time on record.[78]

In the 1980s and 1990s, there was much concern about an epidemic of “crack babies,” thought to have been permanently damaged by their mothers’ use of crack cocaine during pregnancy. The behavioral and cognitive issues exhibited by many inner-city kids were viewed as the inevitable result of their mothers’ addiction, which led to a wave of punitive legal actions against such women.

But long-term follow-up studies have shown that children from the same high-poverty areas who had not been exposed to cocaine in utero were equally likely to have developmental and intellectual delays as the babies born with cocaine in their systems. The big risk to all these children, researchers now agree, was—and remains—poverty itself, which is an especially powerful toxin for the developing brain.[79]

In chapter 11, I analyzed the “crisis of the family” as it presented itself at the beginning of the 1990s, arguing against the common perception that America’s social ills at that time stemmed from abandonment of “traditional” family forms. I suggested that we were actually in the midst of a major upheaval in the relationship between workers and employers forged in the wake of the New Deal and World War II. While the statistics in that chapter are now dated, they provide valuable evidence that the increasing inequality and economic insecurity we have seen in the twenty-first century are not a temporary result of the 2001 and 2007–2009 recessions but have deep structural roots. In my 2016 epilogue, I discuss how the growth of economic inequality has interacted with the decline in gender inequality to create a new pattern of gains and losses for American families and between men and women.[80]

NO ONE CAN PREDICT WHAT NEW FAMILY TRENDS AND INCIDENTS will capture media attention in coming years. But it is safe to say that many Americans will continue to interpret new developments in light of the historical myths discussed in this book.

My hope is that understanding the complexities of family life in the past, including the trade-offs, reversals, and diverse outcomes that have accompanied the changes seen in the past hundred years, will better prepare us to meet the new challenges and opportunities of the next century. Only when we have a realistic idea of how families have and have not worked in the past can we make informed decisions about how to support families in the present and improve their ability to support themselves going forward.

1. The Way We Wish We Were: Defining the Family Crisis

WHEN I BEGIN TEACHING A COURSE ON FAMILY HISTORY, I often ask my students to write down ideas that spring to mind when they think of the “traditional family.” Their lists always include several images. One is of extended families in which all members worked together, grandparents were an integral part of family life, children learned responsibility and the work ethic from their elders, and there were clear lines of authority based on respect for age. Another is of nuclear families in which nurturing mothers sheltered children from premature exposure to sex, financial worries, or other adult concerns, while fathers taught adolescents not to sacrifice their education by going to work too early. Still another image gives pride of place to the couple relationship. In traditional families, my students write—half derisively, half wistfully—men and women remained chaste until marriage, at which time they extricated themselves from competing obligations to kin and neighbors and committed themselves wholly to the marital relationship, experiencing an all-encompassing intimacy that our more crowded modern life seems to preclude. As one freshman wrote, “They truly respected the marriage vowels”; I assume she meant I-O-U.

Such visions of past family life exert a powerful emotional pull on most Americans, and with good reason, given the fragility of many modern commitments. The problem is not only that these visions bear a suspicious resemblance to reruns of old television series, but also that the scripts of different shows have been mixed up: June Cleaver suddenly has a Grandpa Walton dispensing advice in her kitchen; Donna Stone, vacuuming the living room in her inevitable pearls and high heels, is no longer married to a busy modern pediatrician but to a small-town sheriff who, like Andy Taylor of The Andy Griffith Show, solves community problems through informal, old-fashioned common sense.